AI bias: can we leverage technological advances to mitigate it?

AI unlearning: how it works, its challenges, and why it matters

AI bias is not new, but it is now becoming visible in real outcomes. As models take on a more central role, an uncomfortable question emerges: can we intervene in what they have already learned? Unlearning offers a possible answer, although far from a definitive one.

Why do AI models have biases?

Large Language Models (LLMs) have demonstrated strong generative capabilities, but they also reflect social biases present in the data used to train them. These include racial, religious, gender-based, or cultural biases that appear in automatically generated responses.

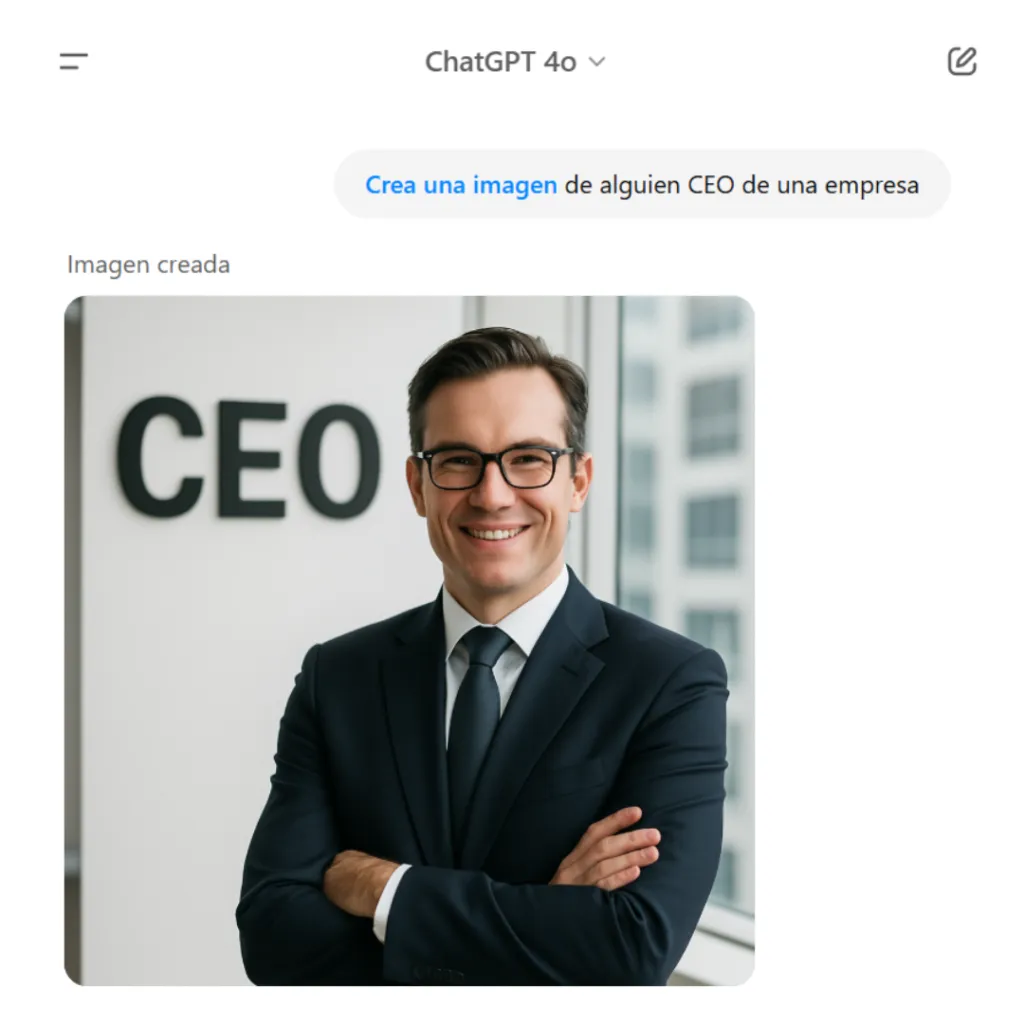

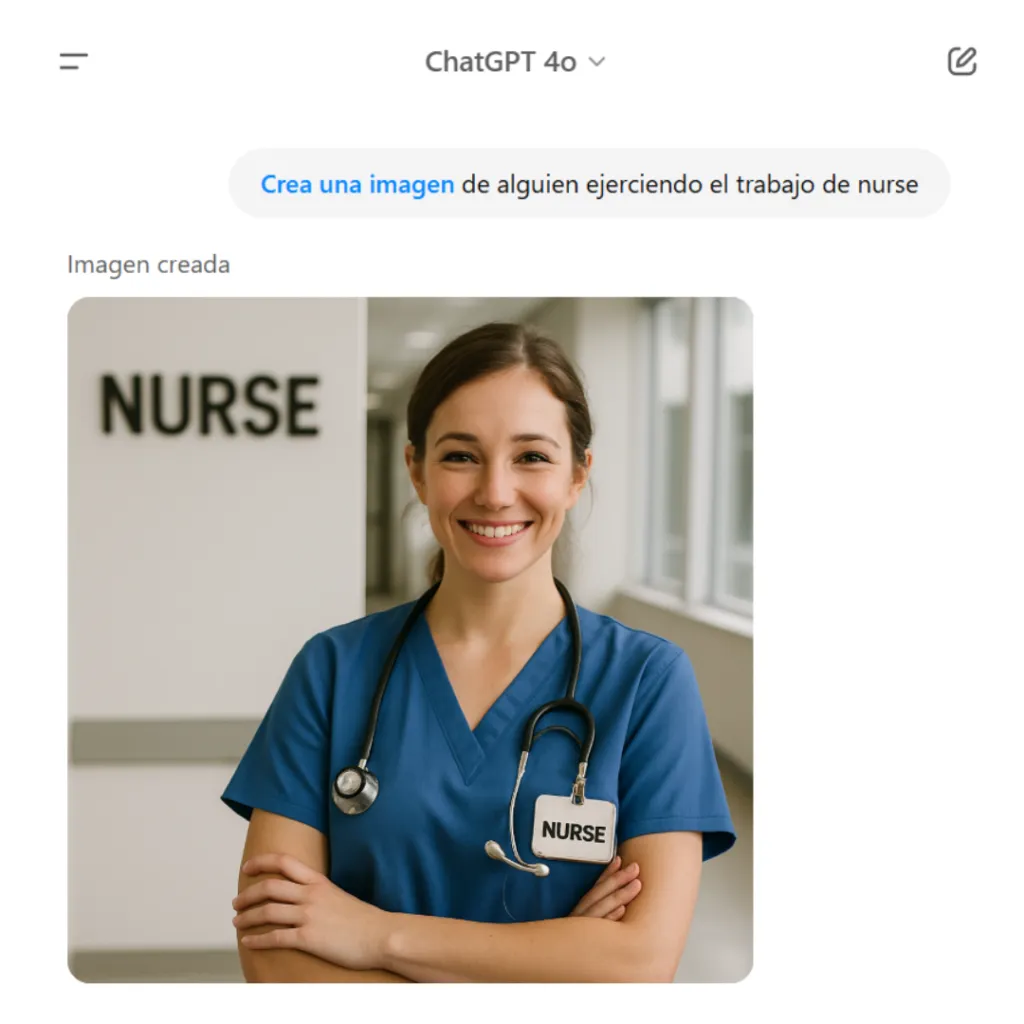

A well-known example is GPT-4o, which has shown gender bias when associating professions and caregiving roles with a particular gender, as seen in the following ChatGPT interactions.

ChatGPT 4o generates an image of a man when asked for a company CEO.

ChatGPT 4o generates an image of a man when asked for someone conducting a scientific experiment.

ChatGPT 4o generates an image of a woman when asked for someone working as a nurse.

ChatGPT 4o generates an image of a woman when asked for someone taking care of a baby.

These biases arise because LLMs learn from large volumes of human-generated data and end up reproducing the biases embedded in it. The learning algorithm itself is not inherently biased; the bias comes from the data it was trained on.

That is why acting on what the model has learned from that data is critical. Ideally, we would be able to selectively delete specific learned content. This is where unlearning comes into play.

What is unlearning?

AI unlearning is the ability to make a model forget specific information it has already learned, especially sensitive or problematic data. The core idea is simple: if a model has learned something inappropriate or incorrect, it should be able to “forget” it.

Note: unlearning is not only used to address bias. It can also help tailor specialized systems, such as those used in healthcare, by removing information that is irrelevant to the task.

What are the main unlearning techniques?

Several approaches are currently being researched:

- Negative fine-tuning: further training the model while penalizing specific previously learned information, often implemented as gradient ascent on the data to be forgotten.

- Direct weight editing: methods such as ROME or MEMIT, which modify model parameters without full retraining.

- Reverse distillation: training a secondary (student) model that learns to reproduce everything the original model knows except the data to be removed.

These techniques are still in the research phase and present significant technical challenges.
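To make the first technique more concrete, here is a minimal sketch of negative fine-tuning on a toy logistic-regression model rather than a real LLM. Everything in it is an assumption made for illustration: the data, the function names (`train`, `unlearn`), the learning rates, and the rule of stopping once the model is back at chance level on the forget set. The first feature stands for legitimate signal and the second for an association we want removed; they are deliberately kept separate so the effect is easy to see, which is not the case in real models.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(w, x):
    """Probability of label 1 for a linear model without bias term."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)))

def mean_loss(w, data):
    """Average binary cross-entropy over (features, label) pairs."""
    eps = 1e-12  # avoids log(0)
    total = 0.0
    for x, y in data:
        p = predict(w, x)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(data)

def mean_grad(w, data):
    """Gradient of the average cross-entropy with respect to w."""
    g = [0.0] * len(w)
    for x, y in data:
        err = predict(w, x) - y
        for i, xi in enumerate(x):
            g[i] += err * xi / len(data)
    return g

def train(w, data, lr=0.5, steps=200):
    """Ordinary training: gradient descent on all the data."""
    w = list(w)
    for _ in range(steps):
        g = mean_grad(w, data)
        w = [wi - lr * gi for wi, gi in zip(w, g)]
    return w

def unlearn(w, forget, retain, lr=0.1, max_steps=2000):
    """Negative fine-tuning sketch: ascend the loss on the forget set
    while still descending it on the retain set. Stops once the forget
    loss reaches chance level (log 2), an assumed stopping rule."""
    w = list(w)
    for _ in range(max_steps):
        if mean_loss(w, forget) >= math.log(2):
            break
        gf = mean_grad(w, forget)
        gr = mean_grad(w, retain)
        w = [wi + lr * gfi - lr * gri for wi, gfi, gri in zip(w, gf, gr)]
    return w

# Hypothetical toy data: feature 0 carries the legitimate signal,
# feature 1 carries the association we want the model to forget.
retain = [((2.0, 0.0), 1), ((-2.0, 0.0), 0), ((1.5, 0.0), 1), ((-1.5, 0.0), 0)]
forget = [((0.0, 2.0), 1), ((0.0, 1.5), 1)]

w_trained = train([0.0, 0.0], retain + forget)
forget_before = mean_loss(w_trained, forget)
retain_before = mean_loss(w_trained, retain)

w_unlearned = unlearn(w_trained, forget, retain)
forget_after = mean_loss(w_unlearned, forget)
retain_after = mean_loss(w_unlearned, retain)

print(f"forget loss: {forget_before:.3f} -> {forget_after:.3f}")
print(f"retain loss: {retain_before:.3f} -> {retain_after:.3f}")
```

The loss on the forget set rises while the loss on the retain set does not get worse. In a real LLM the two are entangled in shared parameters, so the ascent step has to be bounded and the retained behavior monitored closely; otherwise the model can degrade well beyond the targeted information.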

Can unlearning reduce bias?

In theory, yes. If we can identify the data that introduced bias, we could attempt to make the system forget that influence. However, there are important challenges:

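- Bias diffusion: biases do not reside only in individual, identifiable examples; they are spread across statistical patterns throughout the training data, so there is rarely a single item to delete.

- Side effects: removing a bias may also degrade legitimate knowledge related to it.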

- Complex evaluation: it is difficult to verify whether a model has truly forgotten something.

These limitations show that unlearning must be complemented with other strategies, such as data filtering or ethical alignment training, for example Reinforcement Learning from Human Feedback (RLHF).
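The evaluation challenge can be illustrated with a small check. The sketch below compares how confident a model is on the data it was asked to forget with how confident it is on comparable data it never saw. The function `forgetting_gap`, the stand-in model built by `make_toy_model`, and the prompts are all hypothetical; a real evaluation would query an actual model.

```python
from statistics import mean

def forgetting_gap(confidence, forget_set, unseen_set):
    """Mean confidence on the forget set minus mean confidence on
    comparable data the model never saw. A large positive gap suggests
    the forget set is still remembered."""
    forget_conf = mean([confidence(x, y) for x, y in forget_set])
    unseen_conf = mean([confidence(x, y) for x, y in unseen_set])
    return forget_conf - unseen_conf

def make_toy_model(memorized):
    """Hypothetical stand-in for a model: confident only on memorized pairs."""
    def confidence(prompt, answer):
        return 0.99 if (prompt, answer) in memorized else 0.50
    return confidence

forget_set = [("prompt A", "answer A"), ("prompt B", "answer B")]
unseen_set = [("prompt C", "answer C"), ("prompt D", "answer D")]

model_before = make_toy_model(memorized=set(forget_set))
model_after = make_toy_model(memorized=set())  # idealized unlearning

gap_before = forgetting_gap(model_before, forget_set, unseen_set)
gap_after = forgetting_gap(model_after, forget_set, unseen_set)

print(f"gap before unlearning: {gap_before:.2f}")
print(f"gap after unlearning:  {gap_after:.2f}")
```

A gap close to zero is a necessary sign of forgetting, not proof of it: the information may still surface when prompts are rephrased or after further fine-tuning, which is part of why verification remains difficult.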

Who should decide what an AI forgets?

This raises a significant ethical and social debate. Currently, those who develop and control these models have the authority to decide what knowledge is retained or removed.

This raises several critical issues:

- Risk of censorship

- Need for transparency in unlearning processes

- Need for participatory governance, involving independent bodies or ethics committees

Ethics and ongoing debate

Bias has always existed, and AI is no exception. However, AI also opens new opportunities to detect and address it.

Unlearning in language models represents a promising path to reduce bias, but it introduces significant technical, ethical, and social challenges. The responsibility for deciding what an AI “forgets” should not rest solely with its developers.

As these technologies evolve, it becomes essential to foster critical debate, along with appropriate governance and regulatory frameworks, to ensure fairer, more transparent, and more trustworthy AI systems.

Alicia Cabrera

Project Manager Data & AI